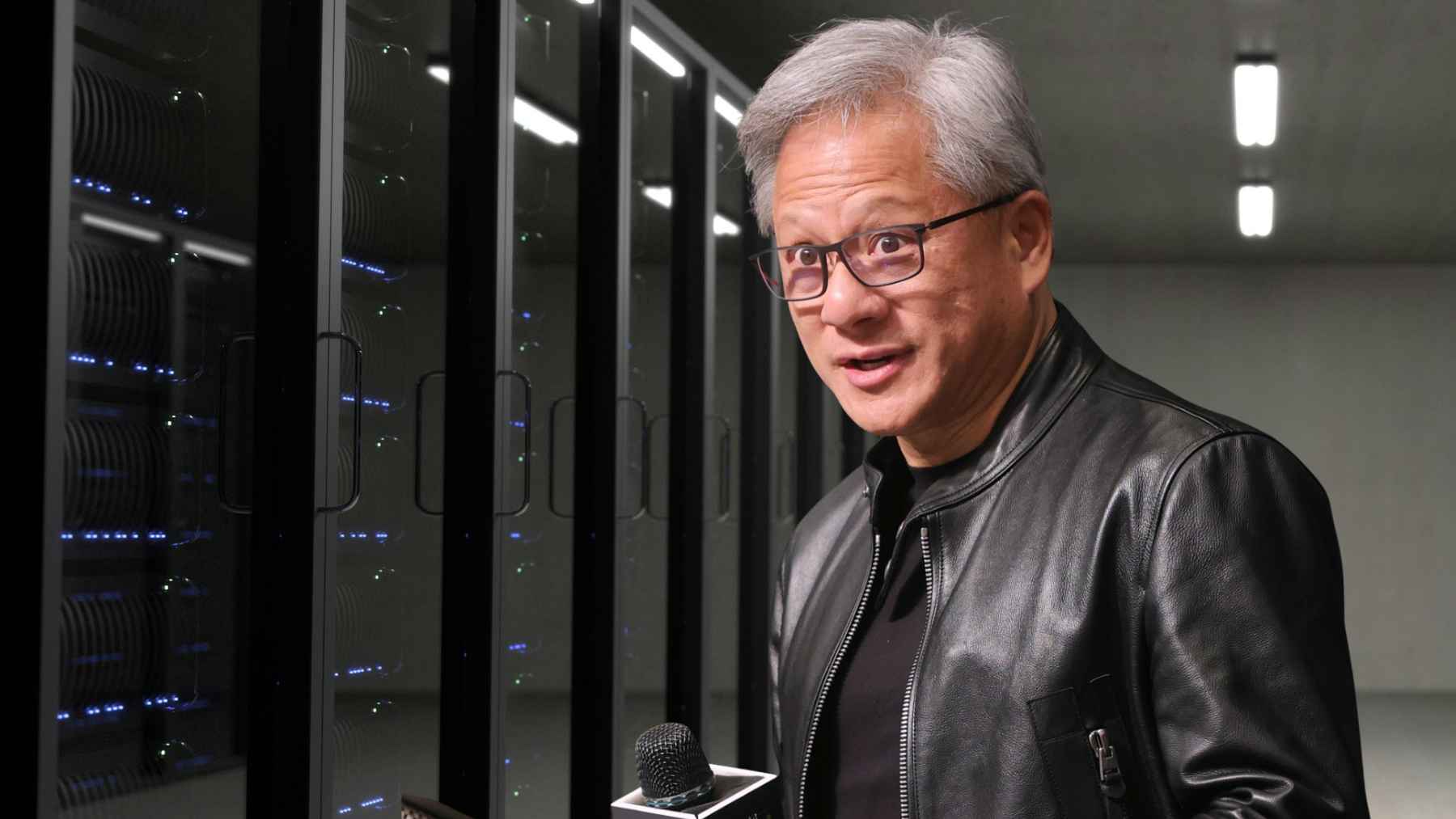

Nvidia CEO Jensen Huang used the company’s GTC conference in San Jose to push a simple idea. He called OpenClaw “the new computer,” and urged companies to build an OpenClaw strategy while Nvidia rolled out NemoClaw, a security-focused layer meant to make workplace agents feel less risky.

That message lands at a tense moment for the industry. Nvidia is betting the next big spending wave will be inference, the everyday job of running AI for real users, even as rivals build competing chips and governments worry about what agents can do once they get system access.

OpenClaw hits the spotlight

Huang said OpenClaw reached in weeks a level of popularity that took Linux decades, and he likened it to a Windows-style operating system for personal AI. If that sounds grand, the practical point is simpler. Agents are shifting from a chat window to software that can click, type, and run tasks across your machine.

The ripple effects showed up quickly in markets and hiring. Reuters reported OpenAI hired OpenClaw creator Peter Steinberger, while Nvidia announced NemoClaw with Steinberger and pitched it as a more secure way to run agents at work. In China, OpenClaw-linked shares jumped, with MiniMax Group and Zhipu each rising about 20% after Huang’s endorsement.

Inference is where the dollars go next

Huang said “the inference inflection has arrived,” and Nvidia is trying to put a number on it. Reuters reported the company now sees at least $1 trillion in AI chip revenue opportunity through 2027, up from a $500 billion figure it had cited through 2026.

Nvidia also laid out how it wants to divide inference work. Reuters described a two-step flow where a “prefill” stage turns a request into tokens and a “decode” stage produces the answer. Nvidia says its Vera Rubin chips will handle prefill, and Groq technology, licensed in a $17 billion deal, will handle decode in systems shown at GTC.
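For readers who want to picture the split, here is a toy sketch of the two-step flow as described above. It is an illustration only, not Nvidia's or Groq's implementation: the tokenizer, the `Cache` class, and the arithmetic standing in for a model are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Cache:
    """State handed from the prefill stage to the decode stage (hypothetical)."""
    tokens: list = field(default_factory=list)


def prefill(request: str) -> Cache:
    # Stage 1: turn the whole request into tokens in a single pass.
    # Toy tokenizer (an assumption): one integer per word, equal to its length.
    return Cache(tokens=[len(word) for word in request.split()])


def decode(cache: Cache, max_new_tokens: int) -> list:
    # Stage 2: produce the answer one token at a time, each step reading
    # everything seen so far and appending its own output to the cache.
    answer = []
    for _ in range(max_new_tokens):
        next_token = sum(cache.tokens) % 50  # stand-in for a real model step
        cache.tokens.append(next_token)
        answer.append(next_token)
    return answer


cache = prefill("run the report")
answer = decode(cache, max_new_tokens=3)
```

The shape is the point: prefill touches the whole request at once, while decode is a loop that cannot start its next step until the previous one finishes, which is why the two stages can suit different hardware.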

Security becomes the real bottleneck

An agent is only helpful if it is trusted with permissions, and that is where the anxiety starts. Reuters reported Chinese government agencies and state-owned enterprises warned staff not to install OpenClaw on office devices, pointing to risks it could leak, delete, or misuse data once granted access.

The same report said OpenClaw was uploaded to GitHub last November and has been promoted by some local governments even as central authorities issued repeated warnings.

For military, defense, and other sensitive environments, the lesson is not “never use agents.” It is that agent software should be treated like privileged access, with audit trails, tight policy, and clear boundaries around what data can be touched. Who wants a productivity tool that can turn one bad permission into a costly cleanup?

NemoClaw is Nvidia’s answer to trust

Nvidia’s NemoClaw announcement is meant to add governance on top of OpenClaw’s speed. In its press release, Nvidia said NemoClaw installs in a single command and adds privacy and security controls, including an isolated sandbox and policy-based guardrails.

It also described options to use local Nemotron models and to route some requests to cloud models through a “privacy router.”

There is also a business angle that is hard to miss. Nvidia said always-on agents need dedicated compute and pointed to platforms from RTX PCs to DGX Station and DGX Spark. In plain terms, the agent era pulls hardware purchases closer to the desktop and the server rack.

Chips, clouds, and geopolitics collide

Huang used GTC to defend Nvidia’s place in big tech stacks, even as customers like Google and Amazon pursue their own AI-critical chips and AMD promotes software meant to lessen Nvidia’s grip.

Reuters reported OpenAI had found some Nvidia chips disappointing for inference, which is awkward as the industry pushes models to spend longer generating answers. Huang’s answer was swagger, telling reporters “you are looking at the inference king.”

China adds another layer. Reuters reported Nvidia is preparing a version of its Groq-based chips for China, and that Huang said Nvidia restarted H200 production after obtaining export licenses from the Trump administration and receiving purchase orders from Chinese customers.

On the demand side, Reuters reported Amazon Web Services agreed to buy 1 million Nvidia GPUs by 2027, alongside other products including Spectrum networking and the new Groq chips.

What leaders should do before agents touch everything

Start small, then prove control. Pick workflows where actions are easy to review, keep agents away from high-risk systems, and enforce least-privilege access so they cannot wander into finance or classified files by mistake. If you are not comfortable letting a contractor click through your production systems, an autonomous agent should not get that freedom either.
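What least-privilege access with an audit trail might look like in practice can be sketched in a few lines. This is a minimal illustration under stated assumptions, not NemoClaw's or OpenClaw's actual API: the `Policy` and `AgentGate` names, the action strings, and the file paths are all hypothetical.

```python
import posixpath
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset  # e.g. {"read"}; anything else is denied
    allowed_roots: tuple        # directories the agent may touch


@dataclass
class AgentGate:
    policy: Policy
    audit_log: list = field(default_factory=list)

    def check(self, action: str, path: str) -> bool:
        # Normalize first so "../" cannot be used to step outside a root.
        normalized = posixpath.normpath(path)
        in_scope = any(
            normalized == root or normalized.startswith(root.rstrip("/") + "/")
            for root in self.policy.allowed_roots
        )
        allowed = action in self.policy.allowed_actions and in_scope
        # Record every decision, allowed or not, so it can be reviewed later.
        self.audit_log.append(
            {"action": action, "path": normalized, "allowed": allowed}
        )
        return allowed


gate = AgentGate(Policy(frozenset({"read"}), ("/srv/reports",)))
ok_read = gate.check("read", "/srv/reports/q1.csv")
blocked_delete = gate.check("delete", "/srv/reports/q1.csv")
blocked_escape = gate.check("read", "/srv/reports/../finance/ledger.csv")
```

The design choice is deny by default: the agent gets only the actions and directories named in the policy, and refused requests are logged alongside approved ones.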

Also watch the economics, because inference is a volume business. The choice between running a model locally, routing to the cloud, or mixing both shows up in the one bill everyone notices: the cloud bill.
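A back-of-the-envelope calculation makes the trade-off concrete. Every number below is a made-up placeholder, not a quoted price from Nvidia or any cloud provider, and the cost model (a flat monthly hardware cost plus per-token charges) is a simplifying assumption.

```python
def monthly_cost(
    tokens_per_month: float,
    local_share: float,              # fraction of tokens served locally, 0.0 to 1.0
    cloud_price_per_million: float,  # hypothetical cloud price per million tokens
    local_fixed_monthly: float,      # hypothetical amortized hardware cost
    local_price_per_million: float,  # hypothetical power/ops cost per million tokens
) -> float:
    local_millions = tokens_per_month * local_share / 1_000_000
    cloud_millions = tokens_per_month * (1.0 - local_share) / 1_000_000
    # The fixed hardware cost applies only if anything runs locally at all.
    fixed = local_fixed_monthly if local_share > 0 else 0.0
    return (
        fixed
        + local_millions * local_price_per_million
        + cloud_millions * cloud_price_per_million
    )


# Placeholder workload: one billion tokens per month.
scenario = dict(
    tokens_per_month=1_000_000_000,
    cloud_price_per_million=2.00,
    local_fixed_monthly=500.0,
    local_price_per_million=0.25,
)
all_cloud = monthly_cost(local_share=0.0, **scenario)
all_local = monthly_cost(local_share=1.0, **scenario)
half_and_half = monthly_cost(local_share=0.5, **scenario)
```

With these placeholder figures the all-cloud option costs 2,000 a month, the even split 1,625, and the all-local option 750. Lower the volume enough and the fixed hardware cost flips the ranking, which is why it pays to rerun the calculation with real prices.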

The press release was published on NVIDIA Newsroom.